Deployment Strategies

ML models deployed as a real-time service support an advanced traffic management tool called variations. The tool allows you to perform a canary release of a new model or to shadow deploy a model.

A variation is an additional identifier through which you can manage the amount of traffic routed to a specific deployed build. You can deploy multiple builds per model by assigning builds to different variations.

Shadow deployment lets you test a model on production data without returning its predictions to the caller. The Qwak platform still logs all requests and model responses, so you can review them and evaluate the model's performance.
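To make the idea concrete, here is a minimal conceptual sketch of shadow routing in plain Python. It is not how the Qwak platform is implemented; `serve_with_shadow`, the two stand-in models, and the in-memory `shadow_log` are hypothetical names used only for illustration.

```python
from typing import Any, Callable, Dict, List

Model = Callable[[Dict[str, Any]], Any]

# Hypothetical in-memory log; a real platform would persist these records.
shadow_log: List[Dict[str, Any]] = []


def serve_with_shadow(request: Dict[str, Any], live: Model, shadow: Model) -> Any:
    """Return the live model's prediction; only log the shadow model's."""
    live_response = live(request)
    try:
        shadow_response = shadow(request)
    except Exception as exc:  # a failing shadow must never affect the caller
        shadow_response = f"error: {exc}"
    shadow_log.append(
        {"request": request, "live": live_response, "shadow": shadow_response}
    )
    return live_response


# Two stand-in "models" that score a single numeric feature.
current_model = lambda req: req["feature_b"] * 2
candidate_model = lambda req: req["feature_b"] * 3

result = serve_with_shadow({"feature_b": 1}, current_model, candidate_model)
```

The caller only ever sees `result`, which comes from the live model. The candidate's output is kept in `shadow_log` so the two can be compared later.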

Deploying a Real-Time Model with Variations

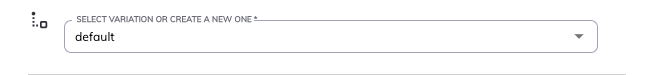

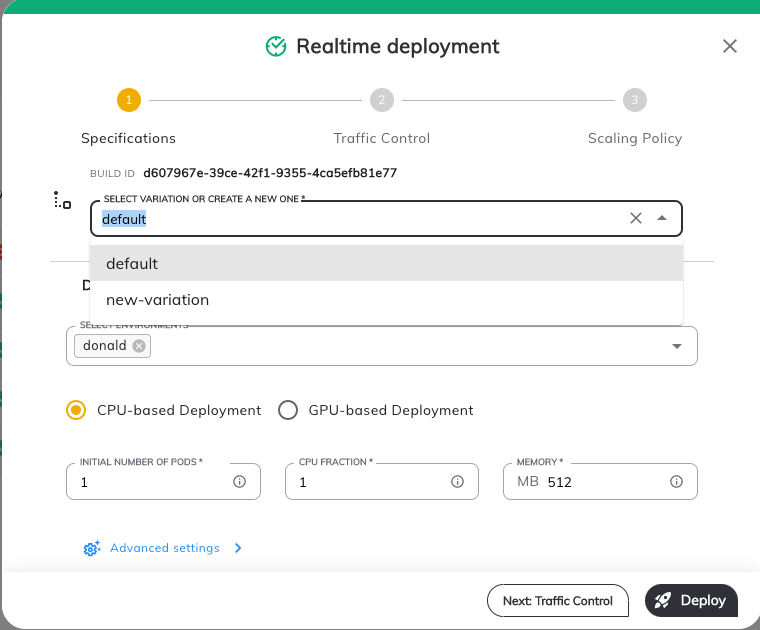

When you first deploy a model, the variation name defaults to "default".

The first build automatically receives 100% of the traffic.

Deploying an Additional Variation

To deploy an additional variation, you must first create an audience.

You can then attach the audience to the model during the deployment process.

When deploying an additional variation you are prompted to enter a variation name. Choose a name that adheres to the following rules:

- Contains no more than 36 characters.

- Contains only lowercase alphanumeric characters, dashes, or periods.

- Starts with an alphanumeric character.

- Ends with an alphanumeric character.
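The naming rules above can be checked locally before you open the deployment popup. The following is a minimal sketch using only the standard library; `is_valid_variation_name` is a hypothetical helper, not part of the Qwak SDK, and it assumes that an empty name is invalid.

```python
import re

MAX_VARIATION_NAME_LENGTH = 36
# Lowercase alphanumerics, dashes, or periods; alphanumeric at both ends.
_VARIATION_NAME_PATTERN = re.compile(r"[a-z0-9](?:[a-z0-9.-]*[a-z0-9])?")


def is_valid_variation_name(name: str) -> bool:
    """Check a candidate name against the four rules listed above."""
    if len(name) > MAX_VARIATION_NAME_LENGTH:
        return False
    # fullmatch rejects the empty string and any disallowed character.
    return _VARIATION_NAME_PATTERN.fullmatch(name) is not None
```

For example, `is_valid_variation_name("canary-v2.1")` returns `True`, while `is_valid_variation_name("Canary")` and `is_valid_variation_name("-canary")` both return `False`.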

To deploy an additional variation:

- Click the deploy button next to the build you want to deploy.

- In the deployment popup, enter a new variation name and click "create new variation" in the selection list.

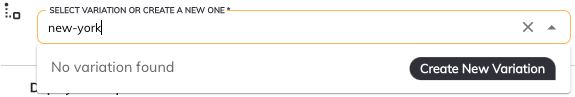

- In the Traffic Control tab, attach the new or existing variations to the audience.

- Ensure that the total traffic percentages add up to 100% in each audience.

- Select a fallback variation (either the new one or an existing one).

- Click Deploy.

Replacing an Existing Variation

To replace an existing variation:

- In the deployment popup, choose the variation you want to replace.

- Modify the deployed model. You can replace any variation, including the default variation. The next tab, Traffic Control, also lets you modify the traffic percentages.

Deployment via the CLI

To deploy a model with variations via the CLI, use this command:

    qwak models deploy realtime --from-file <config-file-path>

The configuration file should look like this:

    model_id: <model-id>
    build_id: <build-id-to-deploy>
    realtime:
      variation_name: <The variation name being deployed>
      audiences:
        - id: <The audience id>
          routes:
            - variation_name: <First variation>
              weight: 20
              shadow: false
            - variation_name: <The variation name being deployed>
              weight: 80
              shadow: false
      fallback_variation: <One of the variations>

- If you use `--variation-name` in the CLI command, you don't have to pass `variation_name` in the configuration file.

- When you deploy your first build, you don't have to pass any variation-related data. You can also pass just the variation name parameter. Traffic is automatically adjusted to 100%.

- If you are using the configuration file, you must pass all the existing variations, regardless of whether you modify them or not.
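Because the file must list every variation and the weights in each audience must total 100%, it can help to sanity-check the configuration before running the CLI. The sketch below works on the configuration as an already-parsed dictionary. `find_config_problems` is a hypothetical helper, not part of the Qwak CLI. It assumes that `audiences` and `fallback_variation` are nested under `realtime`, that every route counts toward the 100% total, and that the fallback must be one of the routed variations. The audience id and variation names are made-up placeholders.

```python
from typing import Any, Dict, List


def find_config_problems(config: Dict[str, Any]) -> List[str]:
    """Return human-readable problems found in a parsed deployment config."""
    problems: List[str] = []
    realtime = config.get("realtime", {})
    routed_names = set()

    for audience in realtime.get("audiences", []):
        routes = audience.get("routes", [])
        routed_names.update(route["variation_name"] for route in routes)
        total = sum(route["weight"] for route in routes)
        if total != 100:
            problems.append(
                f"audience {audience['id']}: weights sum to {total}, expected 100"
            )

    # Assumption: the fallback must be one of the variations that receive traffic.
    fallback = realtime.get("fallback_variation")
    if routed_names and fallback not in routed_names:
        problems.append(f"fallback_variation {fallback!r} is not a routed variation")
    return problems


# Example config as a dict, as it might look after parsing the YAML file.
example = {
    "model_id": "test_model",
    "build_id": "<build-id-to-deploy>",
    "realtime": {
        "variation_name": "canary",
        "audiences": [
            {
                "id": "audience-1",
                "routes": [
                    {"variation_name": "default", "weight": 20, "shadow": False},
                    {"variation_name": "canary", "weight": 80, "shadow": False},
                ],
            }
        ],
        "fallback_variation": "default",
    },
}

problems = find_config_problems(example)
```

An empty `problems` list means the checks passed. These checks cover only the rules stated on this page, so the platform may still reject a file for other reasons.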

Inference on a Specific Variation

You can run inference against a specific variation by using one of the following clients:

Python Runtime SDK

    import pandas as pd
    from qwak_inference import RealTimeClient

    model_id = "test_model"
    feature_vector = [
        {
            "feature_a": "feature_value",
            "feature_b": 1,
            "feature_c": 0.5
        }
    ]

    client = RealTimeClient(model_id=model_id, variation="variation_name")
    response: pd.DataFrame = client.predict(feature_vector)

Java Inference SDK

    RealtimeClient client = RealtimeClient.builder()
            .environment("env_name")
            .apiKey(API_KEY)
            .build();

    PredictionResponse response = client.predict(PredictionRequest.builder()
            .modelId("test_model")
            .variation("variation_name")
            .featureVector(FeatureVector.builder()
                    .feature("feature_a", "feature_value")
                    .feature("feature_b", 1)
                    .feature("feature_c", 0.5)
                    .build())
            .build());

    Optional<PredictionResult> singlePrediction = response.getSinglePrediction();
    double score = singlePrediction.get().getValueAsDouble("score");

REST API

    curl --location --request POST 'https://models.<environment_name>.qwak.ai/v1/test_model/<variation_name>/predict' \
        --header 'Content-Type: application/json' \
        --header 'Authorization: Bearer <Auth Token>' \
        --data '{"columns":["feature_a","feature_b","feature_c"],"index":[0],"data":[["feature_value",1,0.5]]}'

Targeting a Specific Audience

Python Client

You can run inference against a specific audience by using the Python SDK:

    client.predict(feature_vector, metadata={"<key>": "<value>"})

Pass a dictionary of key-value pairs as metadata. The platform checks these values against the audience conditions and routes the request to the matching audience.

REST API

    curl --location --request POST 'https://models.<environment_name>.qwak.ai/v1/test_model/<variation_name>/predict' \
        --header 'Content-Type: application/json' \
        --header 'Authorization: Bearer <Auth Token>' \
        --header "<key>: <value>" \
        --data '{"columns":["feature_a","feature_b","feature_c"],"index":[0],"data":[["feature_value",1,0.5]]}'
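If you are not using the Python SDK, the REST call above can also be assembled with the Python standard library. The sketch below builds the request without sending it. The URL pattern, headers, and payload layout are taken from the curl example. `build_predict_request` is a hypothetical helper, and the `my-env` environment name and the `region` metadata key are placeholders, not real Qwak values.

```python
import json
import urllib.request
from typing import Any, Dict, List


def build_predict_request(
    environment: str,
    model_id: str,
    variation: str,
    token: str,
    columns: List[str],
    rows: List[List[Any]],
    audience_metadata: Dict[str, str],
) -> urllib.request.Request:
    """Build (but do not send) a predict request like the curl call above."""
    url = f"https://models.{environment}.qwak.ai/v1/{model_id}/{variation}/predict"
    # Same column/index/data layout as the JSON body in the curl example.
    payload = {"columns": columns, "index": list(range(len(rows))), "data": rows}
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {token}",
        **audience_metadata,  # each key-value pair becomes a request header
    }
    return urllib.request.Request(
        url, data=json.dumps(payload).encode("utf-8"), headers=headers, method="POST"
    )


request = build_predict_request(
    environment="my-env",  # placeholder environment name
    model_id="test_model",
    variation="variation_name",
    token="<Auth Token>",
    columns=["feature_a", "feature_b", "feature_c"],
    rows=[["feature_value", 1, 0.5]],
    audience_metadata={"region": "emea"},  # hypothetical audience condition
)
# Sending is left to the caller, for example: urllib.request.urlopen(request)
```

Keeping request construction separate from sending makes it easy to inspect the URL and headers before any traffic reaches the model.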
